When we talk about AI chips, Nvidia is usually the first name that comes to mind. However, Google has quietly built one of the most capable AI silicon lineups in the industry through its homegrown Tensor Processing Unit program. At Cloud Next 2026, the company introduced its eighth-generation TPUs, and this time it took a different approach entirely.

Rather than releasing a single flagship chip, Google split the generation into two purpose-built designs: the TPU 8t for training and the TPU 8i for inference. The company developed both chips in partnership with Google DeepMind.

“Our eighth-generation TPUs are the culmination of more than a decade of development,” said Amin Vahdat, Google’s SVP and chief technologist for AI and infrastructure.

The training chip: TPU 8t

Google designed the TPU 8t as a workhorse for large-scale model training, with the stated goal of cutting frontier model development cycles from months to weeks.

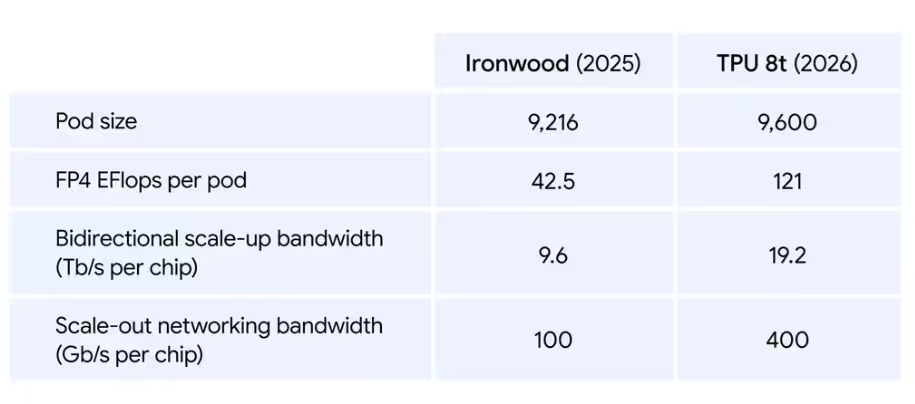

A single TPU 8t superpod scales to 9,600 chips and offers two petabytes of shared high-bandwidth memory (HBM), with double the interchip bandwidth of the previous generation, Ironwood. The architecture delivers 121 exaflops of FP4 compute performance, with per-pod compute performance nearly tripling compared to Ironwood.

To support that scale, Google built a new networking architecture called Virgo Network. It delivers a 4x increase in data center bandwidth using high-radix switches that reduce network layers. Combined with JAX and Google’s Pathways software, Virgo enables near-linear scaling to more than 1 million TPU chips in a single logical training cluster. A single Virgo fabric can link over 134,000 TPU 8t chips with up to 47 petabits per second of non-blocking bi-sectional bandwidth, delivering more than 1.6 million exaflops overall.

Google also introduced TPUDirect RDMA and TPU Direct Storage in the 8t. TPUDirect RDMA moves data directly between HBM and network interface cards, bypassing the host CPU entirely to cut latency. TPU Direct Storage does the same for managed storage access, effectively doubling bandwidth for large data transfers, according to the company.

On cost, Google claims the TPU 8t delivers up to 2.7x better performance-per-dollar compared to Ironwood for large-scale training workloads.

The inference chip: TPU 8i

The TPU 8i targets the other side of the AI pipeline, serving models fast and at scale for concurrent users and agents.

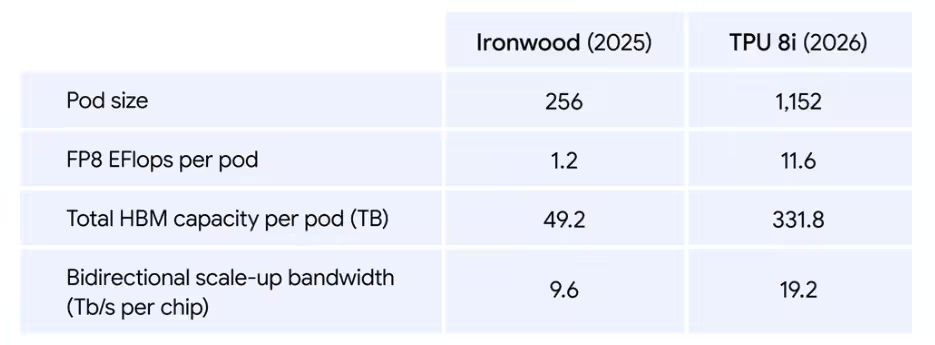

A single TPU 8i pod scales to 1,152 chips with 331.8TB of total HBM capacity and 11.6 exaflops of FP8 compute performance. According to Alphabet CEO Sundar Pichai, the chip can “deliver the massive throughput and low latency needed to concurrently run millions of agents cost-effectively.”

Google said it redesigned the full stack around the TPU 8i to eliminate what it calls the “waiting room” effect, where user requests queue up while hardware sits underutilized. The company addressed this through four specific changes.

First, the chip pairs 288GB of HBM with 384MB of on-chip SRAM, three times more than Ironwood, keeping a model’s active working set entirely on-chip and preventing processors from sitting idle. Second, Google doubled the physical CPU hosts per server by moving to its custom Axion Arm-based processors.

Third, for Mixture of Experts (MoE) models, Google doubled the Interconnect (ICI) bandwidth to 19.2Tbps and introduced a new custom network topology called Boardfly ICI. It interconnects up to 1,152 chips and cuts the number of hops required for all-to-all communication by up to 50%, reducing network latency significantly for large model inference.

Fourth, a new on-chip Collectives Acceleration Engine (CAE) offloads the reduction and synchronization operations required during autoregressive decoding and chain-of-thought reasoning, cutting on-chip latency by up to 5x.

The result, according to Google, is 80% better performance-per-dollar compared to Ironwood, which the company says translates to serving nearly twice the customer volume at the same cost.

Google TPU 8t and 8i: 2x Better Performance Per Watt Than Ironwood

Both the TPU 8t and 8i run on Google’s Axion Arm-based CPU host and support liquid cooling. Google said it optimized power management across the entire stack, with integrated systems that dynamically adjust power draw based on real-time demand.

The company claims both chips deliver up to 2x better performance-per-watt compared to Ironwood.

“By owning the full stack, from Axion host to accelerator, we can optimize system-level energy efficiency in ways that simply cannot be achieved when the host and chip are designed independently,” Vahdat said.

Availability

Both chips will reach general availability later in 2026. Google will offer them through its AI Hypercomputer, the cloud-based supercomputer architecture it launched in 2023 that combines performance-optimized hardware, open software, ML frameworks, and flexible consumption models.

Unlike Nvidia, which sells its chips to a wide range of companies and research institutions, Google reserves its TPU lineup for Google Cloud customers. The company has also confirmed it will be among the first cloud providers to offer NVIDIA Vera Rubin NVL72 systems, indicating it plans to run its own silicon alongside Nvidia hardware rather than as a replacement.